Digital transformation – ever heard of it? It's a term we have heard over and over again in marketing hype. Today most businesses sell their goods and services online through eCommerce. But delivery is a different matter. Physical redemption of digital orders is difficult and fraught with fraud. This is why physical coupons are still used at the supermarket. And consider how freely coupon codes for eCommerce sites are shared on the internet. Enterprises want the speed and ease of digital but with the control of physical. This is where tokenization comes into play.

Tokenization in a nutshell

Tokenization wraps a web3 NFT around the redemption of a physical good or service. Think of it as a digital IOU. This allows businesses to sell on demand. "Delivery" is then split in two: at the time of sale the consumer receives a token, and redemption happens later, once the physical good is available. Redemption can be a simple CRM integration.

Separating sale and delivery in this way makes the business model more flexible. The concept works on public or private blockchains and is universally available today. The downside is trust: the consumer must trust the brand to deliver. This is what plagues the current business models of Kickstarter and Indiegogo. Web3 addresses this by making a token's author publicly visible, so consumers can check whether tokens are legitimate. Brands still need to use web3 standards and legal documentation to earn consumer trust.
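To make the sell-now, redeem-later split concrete, here is a minimal Python sketch. It is an in-memory stand-in, not real web3 or smart-contract code: the `TokenLedger` and `RedemptionToken` names are hypothetical, and a plain dictionary plays the role of the blockchain. Selling creates a token for the buyer; redeeming marks it as used, so the same token (unlike a shared coupon code) cannot be redeemed twice.

```python
from dataclasses import dataclass


@dataclass
class RedemptionToken:
    """A digital IOU for one physical good or service (hypothetical model)."""
    token_id: int
    owner: str          # current holder, i.e. the consumer who bought it
    item: str           # what the token can be redeemed for
    redeemed: bool = False


class TokenLedger:
    """In-memory stand-in for a blockchain ledger; not real web3 code."""

    def __init__(self) -> None:
        self.tokens: dict[int, RedemptionToken] = {}
        self._next_id = 1

    def sell(self, buyer: str, item: str) -> RedemptionToken:
        # Sale happens now: the buyer receives a token, not the physical item.
        token = RedemptionToken(self._next_id, buyer, item)
        self.tokens[token.token_id] = token
        self._next_id += 1
        return token

    def redeem(self, token_id: int, holder: str) -> str:
        # Delivery happens later, once the physical good is available.
        token = self.tokens.get(token_id)
        if token is None:
            raise KeyError(f"unknown token {token_id}")
        if token.owner != holder:
            raise PermissionError("only the current holder can redeem")
        if token.redeemed:
            raise ValueError("token already redeemed")
        token.redeemed = True  # single use, unlike a shared coupon code
        return token.item


ledger = TokenLedger()
order = ledger.sell(buyer="alice", item="preordered truck")
print(ledger.redeem(order.token_id, holder="alice"))  # preordered truck
```

In a real deployment, the `redeem` step is roughly where the CRM integration mentioned above would trigger fulfillment; here it simply returns the item name.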

Business Case for 100% Digital

Going fully digital creates the opportunity to automate. Look at what happened during the recent pandemic. Digital services like Zoom skyrocketed in value. Zoom offered a 100% digitally delivered product, scaled in the cloud and sold online on demand. The result was a great product that fully met the market opportunity. How many sales do you lose because it took too long to get back to the customer? How many consumers give up because the delivery time is too long? With tokenization, you can meet market demand on demand, as Zoom did, no matter your product or service.

Why Web3? Because of Napster

Just as the consumer must trust the brand, you must trust the method by which you tokenize your value. Do not forget the lessons the music industry learned in the 90s. Napster and MP3s created enormous pain and obstacles for digital transformation. Without control over your digital offerings, you could be left exposed to scammers and pirates. There is no point in creating offerings in the digital domain if you cannot be sure you will get paid for them. Web3 has bi-directional trust built in to ensure you control what you create.

My favorite use case: Pre-order

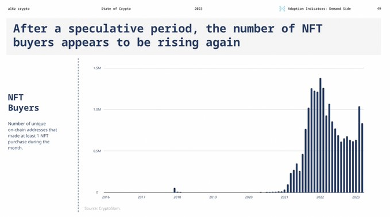

Pre-ordering is something that many brands fail to take advantage of. Kickstarter and Indiegogo are popular examples of this model. Consumers can buy an idea with the promise that the brand will deliver the product once it's manufactured. This sense of community and sponsorship has helped these platforms bootstrap many startups. Tesla takes pre-orders all the time. Cybertruck orders are in their 4th year. That is a lot of consumer trust! I have spoken with a major auto manufacturer. They told me that 21% of pre-orders fall through because consumers get impatient.

Web3 and tokenization can offer key benefits, such as ongoing communication, that keep consumers engaged while they wait. Perks and loyalty plays can improve revenue retention. Web3 makes this use case work better because brands and consumers both trust the infrastructure. I see this trust as instrumental to the success of tokenization. Just as SSL changed the game in eCommerce, Web3 will change the game in digital transformation. The question is: what game do you want to change?

What Tokens Do You Want to Sell?

Tokenization is a powerful way to digitally transform your business offers. Web3 provides the trust and flexibility needed for digital transformation, so you can confidently explore the new delivery models already used in the marketplace today. Building tokens on web3 is easy; managing your web3 assets can be tricky. Do not be afraid to ask for help. With proper guidance and support, you can find your north star in tokenization. Send me a message; I would love to hear how tokenization can help your business.