Achieving Technology Escape Velocity with AI

A Strategic Guide for CIOs to Convert Technical Debt into Technical Wealth

The Gravity of the Status Quo

In the relentless physics of the 2026 enterprise, the primary inhibitor of corporate growth is no longer a lack of capital or a scarcity of ideas—it is Infrastructure Gravity.

For decades, the IT organization has been treated like an ancestral home where new wings are added every few years without ever inspecting the foundation. Today, that foundation is crumbling under the weight of “patch-and-pray” legacy management. Most CIOs find themselves trapped in a crippling paradox: they are being pressured by the board to launch AI-powered rockets, yet their feet are cemented to a 20-year-old mainframe and a fragmented, “spaghetti” architecture.

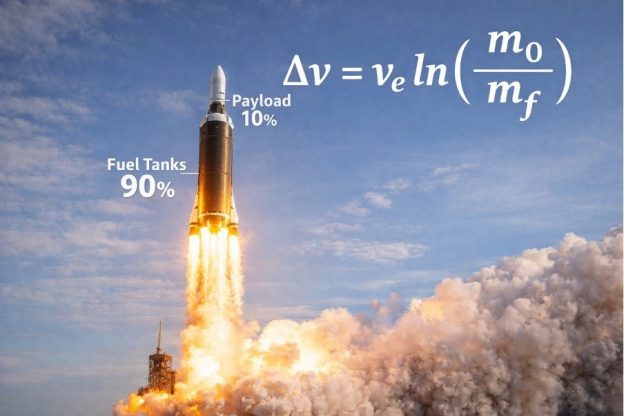

This is the Status Quo Trap. In this state, every dollar spent on innovation is diluted by the “Interest” you are paying on your technical debt. We see it in every sector—organizations where 80% of the budget is consumed just to keep the lights on, leaving only 20% to fight for the future.

To achieve Technology Escape Velocity, you must generate enough “thrust”—measured in efficiency, resilience, and speed—to break free from the gravitational pull of your legacy environment. This requires a fundamental shift in how we define the value of IT.

Technical Debt is a high-interest, predatory loan that makes you a slave to the past. Technical Wealth is an appreciating asset that makes you the architect of the future.

Wealth isn’t just about having the latest tools; it is about Consumable Change. If your modernization efforts are so complex that your people cannot absorb them, you aren’t building wealth; you’re just moving the debt to a different ledger. True Escape Velocity happens when you simplify the core so radically that your organization can pivot as fast as the AI models it seeks to deploy.

The Infrastructure Archaeology Problem

In 2026, most IT environments are not “designed” in the modern sense; they are accumulated. We call this Infrastructure Archaeology.

When you peel back the layers of a typical Tier-1 enterprise stack, you aren’t looking at a cohesive strategy; you’re looking at a physical record of the last thirty years of tech trends. You have the 1990s-era mainframe core handling transactions, the 2000s-era “monolithic” Java middleware, the 2010s “lift-and-shift” cloud instances, and now, a chaotic 2020s layer of disconnected AI pilots.

The Complexity Tax

This fragmentation creates a Complexity Tax—a hidden, compounding interest rate on every new initiative. Because these systems were never built to talk to one another, every “innovation” requires a monumental effort in manual data movement, custom API “duct tape,” and brittle integrations.

Recent research indicates that in 2026, 65% of organizations find their AI environments too complex to manage. The result? Over half of all AI initiatives are either delayed or canceled, not because the AI models failed, but because the “data layer” underneath them was an unnavigable labyrinth.

The Firefighter’s Dilemma

This “Archaeological” state traps your team in a permanent state of Firefighting. When your infrastructure is a patchwork of disconnected silos, visibility is fragmented. When a failure occurs, the root cause could be anywhere in thirty years of code.

- Alert Fatigue: Teams are inundated with thousands of signals across dozens of siloed monitoring tools, making it impossible to distinguish a “glitch” from a “catastrophe.”

- Talent Attrition: Your top-tier engineers didn’t sign up to be digital janitors. When they spend 80% of their time patching “black box” legacy systems they didn’t build, they burn out or leave for AI-native competitors.

- The Innovation Ceiling: Complexity creates a hard ceiling on velocity. If you are spending your entire budget on “staying alive,” you have zero fuel left to “speed the plow.”

To achieve Escape Velocity, you must stop being an archaeologist and start being a deconstructionist. You cannot build a 2026 business on a 1996 foundation.

The AI Unlock – A New Physics for IT

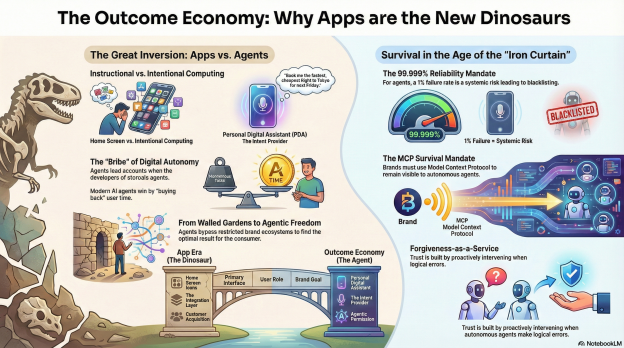

The emergence of Agentic AI has fundamentally changed the “physics” of IT modernization. Historically, refactoring legacy code was a linear, manual process that scaled only with the addition of specialized (and expensive) human labor. In 2026, we have moved into the era of Autonomous Re-engineering, where AI agents act as digital architects rather than simple autocomplete tools.

From “Copilot” to “Agent”

While 2024 was defined by “AI Copilots” that assisted developers with snippets of code, 2026 is defined by Agents that take ownership of end-to-end modernization workflows. These agents don’t just suggest a line of code; they perceive the entire system, plan a multi-step migration, act across diverse tools, and learn from the results.

- Deep Reasoning and Dependency Mapping: AI agents can “ingest” a million lines of legacy COBOL or Java and, within hours, produce a lossless semantic map of the business logic. They identify the “logic bombs” and hidden dependencies that have terrified human developers for decades.

- Surgical Refactoring: Instead of a risky “rip and replace,” AI allows for surgical precision. It can break a 30-year-old monolith into cloud-native microservices while automatically rewriting algorithms to be “Green”—optimizing for CPU cycles and memory usage to lower cloud bills by 15–25%.

The Universal Safety Net

The primary reason modernization roadmaps stall is fear. Fear of the “Unknown-Unknowns”—the one line of undocumented code that, if changed, brings down the entire global supply chain. AI provides the first-ever “Universal Safety Net” to neutralize this fear:

- Automated Test Generation: AI agents can analyze a legacy module and automatically generate comprehensive unit and regression tests with 90%+ accuracy. This creates a “gold standard” for system behavior before a single line of code is modernized.

- Simulated Migrations: Before deploying to production, AI agents can run thousands of “What-If” scenarios in a digital twin of your infrastructure, identifying potential bottlenecks or security vulnerabilities in a sandbox environment.

Acceleration: Months, Not Years

By leveraging agentic workflows, the timeline for a legacy turnaround is no longer measured in years. Enterprises are now completing full-scale system modernizations 30–50% faster and at 30% lower cost. This acceleration is the “rocket fuel” required to reach escape velocity. It allows you to shift your budget from “maintaining the past” to “building the future” in a single fiscal cycle.

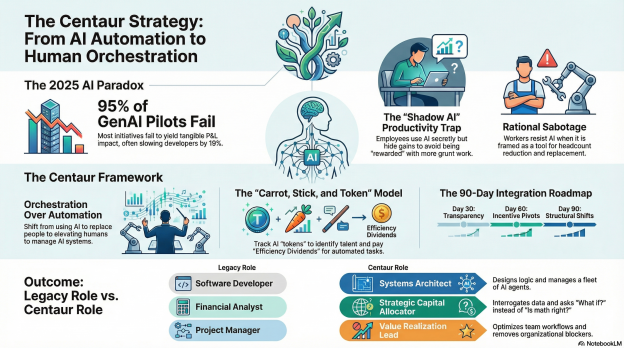

Empowering the Human Navigator

Modernization in 2026 is no longer a purely technical hurdle; it is a human-centered orchestration. To achieve Technology Escape Velocity, the crew must be ready to fly a different kind of ship. The most common point of failure in IT turnarounds today is not the code—it is the Consumption Gap: the distance between the speed of technology and the ability of your people to absorb it.

The Great Re-Promotion: From Builder to Orchestrator

In the legacy era, a developer’s value was tied to their ability to write syntax and manage procedural control. In the Agentic era, every engineer has effectively been “re-promoted” to a manager. They are no longer just authors of logic; they are AI Development Orchestrators.

- The Auditor Mindset: Training has shifted from “How to Code” to “How to Audit.” Your senior engineers now act as “Editors-in-Chief,” presiding over high-volume code outputs generated by AI agents to ensure architectural integrity and security.

- Mastery of Intent: The new core competency is Prompt and Context Engineering. Success is measured by an engineer’s ability to describe complex business logic in natural language or declarative specs that an AI agent can translate into high-performance, cloud-native modules.

The Cultural Safety Net: Consumable Change

Resistance to AI adoption is rarely about laziness; it is almost always about fear of replacement or fear of the unknown. For change to be “consumable,” leaders must bridge the gap between “Industrial Age” operations and “Agentic Age” possibilities.

- Killing the Firefighting Cycle: Buy-in occurs when employees see that AI is not coming for their job; it is coming for the parts of their job they hate. By delegating “digital janitorial work” (patching, documentation, Tier 1 support tickets) to agents, you release human potential for high-value strategic work.

- The “Safety to Fail” Sandbox: Forward-thinking CIOs are building Modernization Academies. These aren’t just training rooms; they are sandboxes where legacy engineers can use AI agents to “break” copies of old systems, learning how to steer the AI without risk to production.

Systems Thinking vs. Task Thinking

The 2026 workforce must unlearn “Task Thinking”—the focus on closing an individual ticket—and embrace Systems Thinking. This is the ability to see how autonomous agents, legacy databases, and human oversight connect to form an adaptive network.

“The most successful engineers won’t just write code; they’ll architect solutions and leverage AI as a collaborator rather than a competitor. This isn’t about augmentation; it’s about the reinvention of engineering excellence.”

The Triple Mandate – Efficiency, Resilience, Velocity

In the strategic landscape of 2026, the luxury of choosing between cost-cutting and innovation has vanished. To achieve Technology Escape Velocity, a modernization turnaround must be a “triple-threat” play. You cannot compromise on one without stalling the entire mission.

As a consultant to the C-suite, I remind leaders that “speeding the plow” requires balanced force. If you push for speed but ignore resilience, you create a catastrophic “debt explosion.” If you focus only on efficiency, you become a low-cost laggard. You must hit all three targets simultaneously.

- Efficiency: Lowering the “Unit Cost of Innovation”

In 2026, efficiency is no longer about just “doing more with less”; it is about algorithmic optimization.

- Cloud Rightsizing: Using AI to move workloads dynamically between public, private, and edge environments based on real-time cost and performance.

- Legacy De-layering: Removing the “middleman” software that has accumulated over decades. Every layer of middleware you remove is a direct injection of capital back into your innovation budget.

- Resilience: From Disaster Recovery to Self-Healing

Resilience in the Agentic era is proactive, not reactive. Organizations that reach escape velocity move away from “Firefighting” and toward Anticipatory IT.

- Predictive Failure Analysis: AI agents monitor infrastructure patterns to predict a hardware or database failure before it happens, rerouting traffic and spinning up redundancies autonomously.

- Automated Security Patching: In a world of AI-driven cyber threats, manual patching is a death sentence. Resilience means having an infrastructure that identifies vulnerabilities and patches itself at machine speed.

- Velocity: Shrinking the “Idea-to-Inference” Gap

Velocity is the ultimate metric of technical wealth. It is the speed at which a business requirement becomes a deployed, consumable reality.

- Removing the QA Bottleneck: By using AI to automate 100% of testing and documentation, you remove the primary “friction points” that slow down traditional roadmaps.

- Continuous Modernization: Instead of one massive “migration project,” velocity is achieved by treating modernization as a background process—like a heartbeat—that constantly refines and updates the stack.

The Synergy of the Mandate

When these three forces align, they create a self-funding loop. The Efficiency gains (reclaimed cloud spend and reduced maintenance) provide the “fuel” for Velocity (new features), while Resilience ensures the “rocket” doesn’t explode on the launchpad.

“You cannot out-innovate a broken foundation. Efficiency buys you the time, Resilience buys you the trust, and Velocity buys you the market.”

Quick Wins 1–5 – The First Stage Boosters

To break the gravitational pull of a legacy environment, you cannot wait for a three-year “transformation” to bear fruit. You need immediate, visible results to fund the journey and prove the methodology to the Board. These first five “Quick Wins” serve as your first-stage boosters: they jettison weight, reclaim fuel, and provide the initial thrust needed for liftoff.

1. AI-Automated Testing: The Instant Safety Net

The single biggest reason CIOs hesitate to modernize is the “fear of the break.” Use AI to analyze your most critical “black box” legacy applications and automatically generate comprehensive unit and regression tests.

- Roadmap Impact: This creates a safety net in weeks that would have taken years to build manually. It reduces future QA bottlenecks by 40–60%, allowing you to refactor with confidence.
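One way to picture this safety net is a characterization (or "golden master") test: record what the legacy code does today, then require the modernized code to match it. The sketch below is a minimal illustration under stated assumptions. `legacy_shipping_fee` and `modern_shipping_fee` are hypothetical stand-ins rather than code from any real system, and in practice an AI agent would propose the input cases instead of a hand-written list.

```python
from typing import Callable, Dict, List, Tuple

Case = Tuple[int, bool]  # (weight_kg, express)


# Hypothetical stand-in for an undocumented legacy routine (amounts in cents).
def legacy_shipping_fee(weight_kg: int, express: bool) -> int:
    fee = 500 + 120 * weight_kg
    if express:
        fee = fee * 2
    if weight_kg > 20:
        fee += 1500  # undocumented heavy-parcel surcharge
    return fee


# Hypothetical refactored version that must preserve the legacy behavior.
def modern_shipping_fee(weight_kg: int, express: bool) -> int:
    base = 500 + 120 * weight_kg
    multiplier = 2 if express else 1
    surcharge = 1500 if weight_kg > 20 else 0
    return base * multiplier + surcharge


def record_golden_master(fn: Callable[[int, bool], int],
                         cases: List[Case]) -> Dict[Case, int]:
    """Capture current behavior as the reference before any refactoring."""
    return {case: fn(*case) for case in cases}


def find_regressions(fn: Callable[[int, bool], int],
                     golden: Dict[Case, int]) -> List[Case]:
    """Return every recorded case where the new code disagrees with the old."""
    return [case for case, expected in golden.items() if fn(*case) != expected]


# Include boundary values around the surcharge threshold.
CASES: List[Case] = [(w, e) for w in (0, 1, 20, 21, 50) for e in (False, True)]
GOLDEN = record_golden_master(legacy_shipping_fee, CASES)
REGRESSIONS = find_regressions(modern_shipping_fee, GOLDEN)
```

An empty `REGRESSIONS` list means the refactored routine matches the legacy one on every recorded case. It does not prove equivalence beyond those cases, which is why broad case generation matters.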

2. “Zombie” Asset Reclamation: Finding Hidden Fuel

Deploy AI Discovery agents across your hybrid cloud and on-prem environments to identify “zombie” infrastructure—servers, storage, and cloud instances that are running but providing zero business value.

- Roadmap Impact: Most enterprises find 15–20% in immediate OpEx savings. This isn’t just a cost-cut; it is reclaimed capital that can be directly re-invested into Stage 2 modernization.
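As a rough illustration of what a discovery pass might compute, the sketch below flags assets that are running but show almost no activity, then estimates the share of monthly spend they represent. The `Asset` fields, the thresholds, and the sample inventory are all assumptions made for this example. Real discovery tooling would draw on richer signals such as ownership tags, dependency maps, and network flows.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Asset:
    name: str
    avg_cpu_pct: float       # average CPU utilization over the review window
    requests_per_day: int    # inbound requests observed over the window
    monthly_cost_usd: float


def find_zombies(assets: List[Asset],
                 cpu_threshold: float = 2.0,
                 max_requests: int = 0) -> List[Asset]:
    """Flag assets that are powered on but show (almost) no activity."""
    return [a for a in assets
            if a.avg_cpu_pct < cpu_threshold and a.requests_per_day <= max_requests]


def reclaimable_share(assets: List[Asset]) -> float:
    """Fraction of total monthly spend attributable to flagged assets."""
    total = sum(a.monthly_cost_usd for a in assets)
    if total == 0:
        return 0.0
    return sum(a.monthly_cost_usd for a in find_zombies(assets)) / total


# Hypothetical inventory for illustration only.
INVENTORY = [
    Asset("billing-api", 41.0, 90_000, 4200.0),
    Asset("old-report-vm", 0.4, 0, 900.0),
    Asset("staging-2019", 1.1, 0, 600.0),
    Asset("search-cluster", 23.0, 12_000, 3300.0),
]

ZOMBIE_NAMES = [a.name for a in find_zombies(INVENTORY)]
SHARE = reclaimable_share(INVENTORY)
```

Flagged assets are candidates for review, not automatic deletion. A quiet server may still be a disaster-recovery standby or a quarterly batch host.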

3. Agentic Documentation: Ending the Knowledge Debt

Use LLMs to “read” and document your oldest, most brittle codebases. AI can translate thousands of lines of undocumented COBOL or legacy Java into plain-English business logic and architectural diagrams.

- Roadmap Impact: This eliminates the “Single Point of Failure” risk associated with retiring staff. It turns a “tribal knowledge” system into a documented, Technical Wealth asset.

4. API Micro-Wrapping: Decoupling the Speed of Business

Instead of trying to replace a massive core system on day one, use AI to build modern API “wrappers” around it. This allows your mobile and web teams to access legacy data through modern protocols.

- Roadmap Impact: You decouple the “Speed of Innovation” from the “Speed of the Core.” Your customer-facing teams can move at 2026 speeds while the multi-year back-end refactoring happens safely in the background.
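To make the wrapping idea concrete, the sketch below puts a thin translation layer in front of a legacy lookup that returns fixed-width records, so that callers only ever see a modern, structured response. Everything here is hypothetical: the `legacy_lookup` function, the record layout (7-character ID, 20-character name, 10-digit balance in cents, 1-character status), and the sample data are invented for illustration. A real wrapper would sit behind an HTTP framework and call the actual core system.

```python
import json

# Hypothetical legacy data store holding fixed-width records.
_LEGACY_RECORDS = {
    "0001234": "0001234" + "JANE DOE".ljust(20) + "0000152050" + "A",
}


def legacy_lookup(account_id: str) -> str:
    """Stand-in for the core system call; returns one fixed-width record."""
    return _LEGACY_RECORDS[account_id]


def get_account(account_id: str) -> dict:
    """Modern-facing wrapper: hides padding, offsets, and status codes."""
    raw = legacy_lookup(account_id.zfill(7))  # legacy IDs are zero-padded
    return {
        "id": raw[0:7],
        "name": raw[7:27].strip(),
        "balance_cents": int(raw[27:37]),
        "active": raw[37] == "A",
    }


# What a web or mobile client would receive as the JSON body.
RESPONSE_BODY = json.dumps(get_account("1234"), sort_keys=True)
```

The design point is that the field offsets live in exactly one place. When the core is eventually refactored, only `get_account` changes and its callers are untouched.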

5. Cloud-Sovereignty Audit: Preempting the Compliance Tax

In 2026, data privacy regulations have become hyper-localized. Use AI agents to scan your data locations and ensure they align with the latest sovereignty laws.

- Roadmap Impact: This “Modernization by Compliance” prevents massive fines and avoids the “Emergency Migration” that occurs when a regulator shuts down a data pipe. It ensures your infrastructure is legally resilient.
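At its simplest, a sovereignty audit compares where each dataset actually lives against where its governing jurisdiction allows it to live. The sketch below shows that comparison. The policy table, region names, and dataset list are illustrative assumptions, not legal guidance or any real regulatory mapping.

```python
from typing import Dict, List, Set

# Hypothetical policy: jurisdiction -> regions where its data may be stored.
POLICY: Dict[str, Set[str]] = {
    "EU": {"eu-west-1", "eu-central-1"},
    "US": {"us-east-1", "us-west-2"},
}

# Hypothetical inventory produced by a discovery scan.
DATASETS: List[Dict[str, str]] = [
    {"name": "eu-customers", "jurisdiction": "EU", "region": "eu-west-1"},
    {"name": "eu-payroll", "jurisdiction": "EU", "region": "us-east-1"},
    {"name": "us-orders", "jurisdiction": "US", "region": "us-west-2"},
    {"name": "apac-leads", "jurisdiction": "SG", "region": "us-east-1"},
]


def find_violations(datasets: List[Dict[str, str]],
                    policy: Dict[str, Set[str]]) -> List[str]:
    """Return datasets stored outside their allowed regions.

    A jurisdiction missing from the policy is treated as a violation,
    so unknown cases surface for review instead of passing silently.
    """
    return [d["name"] for d in datasets
            if d["region"] not in policy.get(d["jurisdiction"], set())]


VIOLATIONS = find_violations(DATASETS, POLICY)
```

Treating unmapped jurisdictions as violations is a deliberate fail-closed choice. The audit should err toward flagging too much rather than too little.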

Once the initial “boosters” have cleared the path, you must apply maximum pressure to the remaining friction points. The next five wins, Quick Wins 6–10, focus on operationalizing the “Agentic” shift, moving from discovery to active, autonomous management of your technical wealth.

6. Predictive Patching: Ending the Firefighting Cycle

Deploy AI-driven vulnerability management that doesn’t just scan for threats but predicts where legacy systems are most vulnerable based on real-time global threat intelligence.

- Roadmap Impact: By automating the patching of legacy environments that were previously “too risky to touch,” you close the security debt gap and reclaim 30% of your security team’s bandwidth from emergency response to proactive architecture.

7. The 1-Module Translation: The Proof of Concept

Identify a single, non-mission-critical legacy module and use an AI-agentic workflow to port it entirely to a modern language (e.g., from COBOL to Python).

- Roadmap Impact: This serves as a “pilot light” for the entire organization. It proves the methodology works, provides a benchmark for “Mean Time to Modernize” (MTTM), and builds the internal confidence needed for large-scale “Stage Separation.”

8. FinOps 2.0: AI-Integrated Cost Governance

In 2026, AI compute is the most expensive line item. Implement FinOps 2.0 tools that use AI to auto-tune workloads, spinning down high-cost GPU instances the moment they are no longer needed.

- Roadmap Impact: Prevents “Inference Bill Shock.” By keeping the cost-per-outcome low, you ensure the modernization project remains self-funding and “Board-proof.”

9. Data Pipeline Scrubbing: Ensuring “AI-Readiness”

Use LLMs to act as a “data janitor,” scanning messy legacy databases to structure, de-duplicate, and label data “at the source.”

- Roadmap Impact: Most AI projects fail because of bad data. By modernizing the data layer first, you shorten the deployment time of every future AI feature by months, ensuring the “plow” never hits a stone.
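The core of scrubbing "at the source" is to normalize records first and de-duplicate second, so that trivially different spellings collapse into one entry. The sketch below shows that order of operations using simple deterministic rules in place of an LLM. The field names and sample records are assumptions for illustration; an LLM-assisted pipeline would handle the fuzzier cases that rules like these miss.

```python
from typing import Dict, List

Record = Dict[str, str]


def normalize(record: Record) -> Record:
    """Canonicalize fields so equivalent records compare as equal."""
    return {
        "email": record["email"].strip().lower(),
        "name": " ".join(record["name"].split()).title(),
    }


def deduplicate(records: List[Record]) -> List[Record]:
    """Keep the first normalized record seen for each email address."""
    seen: Dict[str, Record] = {}
    for record in records:
        clean = normalize(record)
        seen.setdefault(clean["email"], clean)
    return list(seen.values())


# Hypothetical messy rows from a legacy customer table.
RAW = [
    {"email": " Ana@Example.com ", "name": "ana  silva"},
    {"email": "ana@example.com", "name": "Ana Silva"},
    {"email": "bo@example.com", "name": "BO CHEN"},
]

CLEAN = deduplicate(RAW)
```

Using the email address as the identity key is itself an assumption. Choosing the right key for each table is usually the hard part of de-duplication.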

10. Shadow IT Discovery: Securing the “Wild West”

Use AI agents to crawl your network and surface unsanctioned SaaS and “Shadow AI” tools being used by departments.

- Roadmap Impact: Instead of banning these tools, you bring them into the governed infrastructure. This reduces hidden risk (Security Debt) and allows you to consolidate licenses, reclaiming wasted spend for the core roadmap.

Measuring the Thrust – The Technical Wealth Index

In the pursuit of Escape Velocity, you must stop using 20th-century metrics like “Server Uptime” or “Number of Tickets Closed.” These are maintenance metrics; they measure how well you are staying still. To measure progress toward Technical Wealth, you need a new dashboard.

- The Innovation Ratio (The Velocity Metric)

What percentage of your total IT budget is spent on Future Work (new features, market differentiation) vs. Legacy Maintenance (patching, keeping the lights on)?

- Target: Move from the typical 20/80 split to a 70/30 Wealth Split within 12 months.
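The Innovation Ratio reduces to a single division, sketched below. The function name and the budget figures are illustrative assumptions. The only inputs are the two spending categories named above, in any consistent currency unit.

```python
def innovation_ratio(future_spend: float, maintenance_spend: float) -> float:
    """Share of total IT budget going to Future Work (0.0 to 1.0)."""
    total = future_spend + maintenance_spend
    if total <= 0:
        raise ValueError("total budget must be positive")
    return future_spend / total


# Illustrative budgets, in millions: the typical split and the target split.
TYPICAL = innovation_ratio(future_spend=20, maintenance_spend=80)
TARGET = innovation_ratio(future_spend=70, maintenance_spend=30)
GAP_POINTS = round((TARGET - TYPICAL) * 100)  # percentage points to close
```

With these figures the ratio moves from 0.2 to 0.7, a gap of 50 percentage points. The metric is only as good as the discipline used to classify each line item as Future Work or Legacy Maintenance.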

- Mean Time to Modernize (MTTM)

How long does it take to identify a legacy component, map its dependencies, and refactor it into a modern, consumable service?

- Target: Reduce MTTM from quarters to weeks using agentic workflows.
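One plausible way to compute MTTM is the average elapsed time between identifying a legacy component and shipping its modernized replacement. The sketch below makes that assumption explicit. The start and end events, the dates, and the choice of a simple mean are all illustrative, and each organization would need to define the start and stop events for itself.

```python
from datetime import date
from statistics import mean
from typing import List, Tuple

# (identified_on, modernized_on) for each completed component; hypothetical data.
COMPLETED: List[Tuple[date, date]] = [
    (date(2026, 1, 5), date(2026, 1, 26)),
    (date(2026, 2, 2), date(2026, 2, 16)),
    (date(2026, 3, 2), date(2026, 3, 30)),
]


def mttm_days(components: List[Tuple[date, date]]) -> float:
    """Mean Time to Modernize, in calendar days, over completed components."""
    if not components:
        raise ValueError("need at least one completed component")
    return mean([(done - found).days for found, done in components])


MTTM_DAYS = mttm_days(COMPLETED)
MTTM_WEEKS = MTTM_DAYS / 7
```

Only completed components are counted here, which can flatter the number. Tracking the age of components still in flight alongside MTTM guards against that.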

- Inference Efficiency (The AI Cost Metric)

What is the infrastructure cost-per-AI-inference? As you modernize, this cost should trend downward even as usage scales.

- Target: Achieve a 25% year-over-year reduction in cost-per-inference through infrastructure optimization.
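The arithmetic behind this target is sketched below: divide infrastructure cost by inference volume for each year, then compare the two unit costs. The dollar amounts and volumes are invented for illustration. They show how total spend can rise while the unit cost still falls by the targeted 25%.

```python
def cost_per_inference(infra_cost_usd: float, inferences: int) -> float:
    """Infrastructure cost attributable to a single inference."""
    if inferences <= 0:
        raise ValueError("inference count must be positive")
    return infra_cost_usd / inferences


def yoy_reduction(previous: float, current: float) -> float:
    """Fractional year-over-year drop in unit cost (0.25 means 25% cheaper)."""
    return (previous - current) / previous


# Hypothetical figures: spend grows 50% while usage doubles.
LAST_YEAR = cost_per_inference(120_000, 40_000_000)   # $0.00300 per inference
THIS_YEAR = cost_per_inference(180_000, 80_000_000)   # $0.00225 per inference
REDUCTION = yoy_reduction(LAST_YEAR, THIS_YEAR)
MEETS_TARGET = REDUCTION >= 0.25 - 1e-9  # tolerance for floating-point rounding
```

Reporting the unit cost rather than the total bill is what keeps the metric meaningful as usage scales.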

- Change Absorption Rate (The Human Metric)

How quickly can your engineering staff pivot to a new AI tool or infrastructure change? This measures the success of your “Consumable Change” training.

- Target: High staff sentiment scores regarding “tooling effectiveness” and a reduction in “training-to-deployment” lag time.

The “Wealthy” Organization vs. The “Indebted”

As we navigate 2026, the market has become binary. There is no longer a middle ground for “average” IT performance. Organizations either achieve Technology Escape Velocity or they are pulled back into a terminal descent by their own complexity.

The Profile of the “Indebted” Organization

- The Strategy: Treats modernization as a “one-off” project with a fixed end date.

- The Behavior: They continue to “out-hire” their problems, adding more human labor to manage increasingly complex legacy systems.

- The Outcome: The “Complexity Tax” eventually exceeds the innovation budget. During a market shift or a cyber-event, the infrastructure is too brittle to pivot. They are forced into a “Rip and Replace” scenario that costs 5x more than a planned turnaround.

The Profile of the “Wealthy” Organization

- The Strategy: Sees infrastructure as a modular, self-optimizing asset.

- The Behavior: They leverage Agentic AI to handle the “janitorial” IT tasks, freeing their humans to focus on high-value business logic.

- The Outcome: Because their foundation is lean, they absorb new AI models in days. Their Consumable Change rate is high, and their “Unit Cost of Innovation” is the lowest in their peer group. They don’t just survive the “speeding plow”; they drive it.

The 12-Month Flight Plan

Achieving escape velocity is not about a single leap; it is about a series of calculated stages. For the CIO/CTO, this is the 12-month roadmap to reclaiming control.

Phase 1: Ignition (Months 1–3)

- The Audit: Deploy discovery agents to map the “archaeological site” and identify all “zombie” assets.

- The Foundation: Implement Quick Wins 1–3 (Automated Testing, Asset Reclamation, and Agentic Documentation).

- The Human Element: Launch the “Modernization Academy” to begin upskilling engineers from coders to orchestrators.

Phase 2: Liftoff (Months 4–6)

- The Decoupling: Execute Quick Wins 4–6. Use API wrapping to unblock the business and implement predictive patching to stabilize the core.

- The Proof of Wealth: Complete the “1-Module Translation” (Quick Win 7) to prove the AI-modernization methodology to the board.

Phase 3: Stage Separation (Months 7–9)

- The Heavy Lift: Rearchitect your most critical “monolith” using agentic refactoring.

- The Optimization: Implement FinOps 2.0 (Quick Win 8) and Data Scrubbing (Quick Win 9) to ensure your AI infrastructure is cost-effective and ready for scale.

Phase 4: Orbit (Months 10–12)

- Continuous Wealth: Transition from “Modernization Projects” to a Continuous Modernization Office.

- Governance: Execute Quick Win 10 to bring Shadow IT into the fold.

- Result: By Month 12, the savings from Phases 1 and 2 are fully funding the innovation of Phase 4. You have reached escape velocity.

Closing Thought: The plow is moving. You can either be the one steering it, or the one it’s moving toward. It’s time to achieve Escape Velocity.